HPE Compute

GDT, a global partner in digital transformation journeys, accelerates client digitalization and business goals by transforming and modernizing client platforms, networks, and security. GDT has partnered with HPE since 2020, and the alliance brings together technology, services, and partnerships for at-scale compute solutions.

According to Ray Kurzweil, the accelerating pace of change is reflected in the fact that the agricultural revolution took 8,000 years to be perfected, while the human genome was sequenced in only 9 years. Computing power has likewise grown exponentially over a short period. According to Time Magazine, since 1900, computer technology has been climbing dramatically by powers of 10, and it is now progressing more each hour than it did in its entire first 90 years. By the year 2045, computing capabilities are expected to surpass the combined brainpower of all humans.

With technology developing so rapidly, it is challenging for companies to keep up with the required expertise. In 2021, 41% of companies claimed to lack the appropriate analytical skills, according to Statista, and 50% of organizations lacked sufficient AI and data literacy skills to achieve business value, according to Gartner. Artificial intelligence intensifies this demand for expertise in computing, analytics, and data storage.

There is also an explosion of high-quality data from all types of applications, from the personal, like social media, to the professional, like the MedTech sector. At the same time, advances in hardware are democratizing the use of cloud computing, and the advent of GPUs increases AI training speeds with less power consumption and lower costs.

There have also been significant advances in software: sophisticated algorithms and networks now have user-friendly interfaces that facilitate access to all sorts of data without the need for experts such as data scientists, architects, or engineers.

It is becoming increasingly easy to create data, and what started as small-scope processing has become large-scale, resulting in heavy data center workloads. More efficient processes need to be built out, using data lakes to receive information from multiple sources, data engineering and modeling to make the data meaningful, and data visualization to simplify analyses.

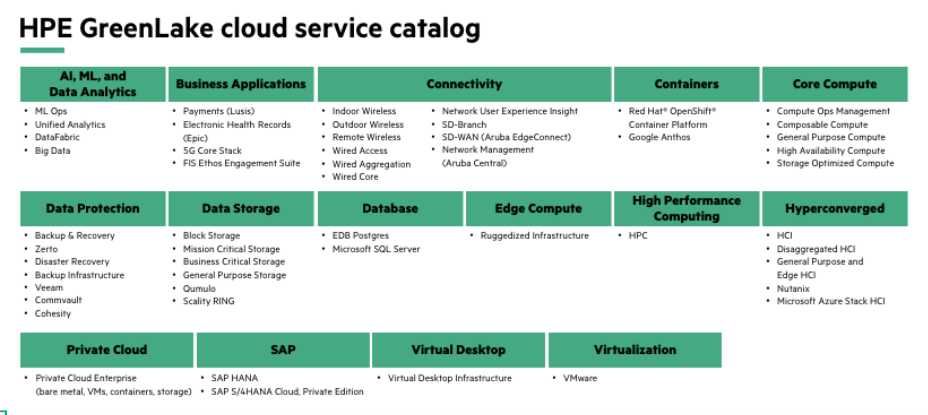

75% of all new data is created at the edge, and data manipulation requires an end-to-end data pipeline, from the IoT (Internet of Things) to Fast Data to Big Data to AI. HPE has been acquiring such technology since 2014, and GreenLake has compute for training models in the data center, compute for inference at the edge, edge analytics, and inference engines. It also has AI software frameworks and AI/HPC storage. HPE’s services for MLOps (Machine Learning Operations) cover data ingestion, data processing, AI/ML, data lake, and data visualization. The result is a relatively simple, integrated system within an extremely complex ecosystem.

HPE is one of the leaders in private cloud with its GreenLake offering, compute services, and artificial intelligence. If you would like more information on how GDT can serve your company’s needs with HPE solutions, contact us.